The honest answer to “will AI replace underwriters?”

Will AI replace underwriters? No. The carrier that thinks the answer is yes will lose money in 2026 - fast. The carrier that thinks the answer is “AI is just hype” will lose money slower, but lose it just as surely.

In my experience working with carrier boards across specialty and commercial lines, the right framing is the one that has held up in every CUO engagement I’ve been part of over the last decade: AI in underwriting is augmentation, not replacement. AI-powered underwriting takes over much of the 30 to 40 percent of underwriter time currently spent on data rekeying and submission triage - and redirects that human judgment to the risks that actually need it. AI underwriter augmentation is the strategy; AI-based underwriting tools are the components.

The numbers are clear. WTW’s March 2026 Advanced Analytics & AI Survey of 59 P&C insurers in the U.S. and Canada found that insurers using more sophisticated analytics achieved combined ratios six percentage points lower and premium growth three percentage points higher than slower adopters between 2022 and 2024. That is a meaningful spread. It also suggests that the carriers who built AI-augmented underwriting between 2022 and 2024 are now compounding the advantage.

This guide is the 2026 refresh of our original answer to the existential question. It is written for a Chief Underwriting Officer at a U.S. P&C carrier who needs a defensible, NAIC-compliant, vendor-neutral playbook for deploying AI underwriting capabilities - and who wants to stop hearing the word “transformation” without seeing a number to anchor it. It complements the broader underwriting workbench guide.

The retirement cliff is real - and AI is the only realistic response

In my experience advising boards, the AI-in-underwriting conversation only stops being abstract when the CUO confronts the workforce math. The math is brutal.

The 50% retirement cliff

The National Association of Mutual Insurance Companies projects that up to 50% of current underwriters will retire by 2028, while fewer new professionals are entering the field. The senior underwriters carrying a carrier’s institutional risk knowledge - the ones who can look at a Tampa restaurant submission and know in 30 seconds whether the appetite is right - are leaving faster than the carrier’s training pipeline can replace them.

I worked with a Midwest commercial lines carrier whose underwriting bench had 23 senior underwriters. Eleven announced retirement within an 18-month window. Their replacement plan, before AI was on the table, was to hire 15 junior underwriters and accept a two-to-three-year ramp. The CUO ran the numbers and realized the ramp would cost roughly five points of combined ratio over those years from inconsistent risk selection alone - before accounting for market share lost when broker turnaround slipped.

The AI math that finally caught up

McKinsey’s foundational research observed that 30 to 40 percent of an underwriter’s time is spent on administrative tasks such as rekeying data or manually executing analyses. That number has held up across multiple replications. Even before the talent cliff, roughly a third to two-fifths of every underwriter’s day goes to the wrong work for a professional with 25 years of judgment.

If AI in underwriting absorbs even half of that administrative load, you free up the equivalent of roughly one in six underwriter FTEs without firing anyone - and you do it specifically for the work that machines do better: structured data extraction, document parsing, pattern detection across submission histories.
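The arithmetic behind the one-in-six figure is simple enough to check directly. The sketch below is a minimal illustration, assuming underwriter time is spread evenly across the team and that the administrative share and the fraction of it AI absorbs are the only inputs; the function names are hypothetical.

```python
def freed_capacity_share(admin_share: float, automated_fraction: float) -> float:
    """Share of total underwriter time released when AI absorbs part of the admin load."""
    if not (0.0 <= admin_share <= 1.0 and 0.0 <= automated_fraction <= 1.0):
        raise ValueError("shares must be between 0 and 1")
    return admin_share * automated_fraction


def freed_fte(team_size: int, admin_share: float, automated_fraction: float) -> float:
    """FTE-equivalents freed across a team, assuming time is evenly distributed."""
    return team_size * freed_capacity_share(admin_share, automated_fraction)


# Admin share at both ends of the 30-40% range, with AI absorbing half of it.
for admin in (0.30, 0.40):
    share = freed_capacity_share(admin, 0.5)
    print(f"admin={admin:.0%}: frees {share:.0%} of time, about 1 FTE per {1 / share:.1f}")
```

With half of a 30 percent admin load absorbed, 15 percent of team time is freed (about one FTE per 6.7 underwriters); at 40 percent it is 20 percent (one per 5). "Roughly one in six" sits inside that range.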

Why 2026 is different from 2024

Three things changed. First, large language models matured: over half of WTW survey respondents now use LLMs and generative AI in production, with another 29% planning adoption within the next two years. Second, the regulatory framework crystallized: 24 states plus DC adopted the NAIC Model Bulletin on AI by mid-2025, and the AI Systems Evaluation Tool pilot launched in January 2026. Third, the buyer math shifted: WTW shows only 16% of P&C insurers currently use AI to augment human underwriting, but 60% plan to prioritize it between now and 2028. Carriers acting in 2026 are getting in before the surge.

What AI actually does in underwriting today (and what it doesn’t)

In my experience, the conversations that go off the rails are the ones that treat AI as one thing. AI in underwriting is several distinct categories of technology, each doing different work, with different governance demands.

The four things AI does well right now

Document parsing and submission intake. Large language models read ACORD forms, broker emails, loss runs, schedules of values, and prior policies - and produce structured data fields ready for the workbench. This is the highest-ROI use case in commercial P&C because submission intake is also the biggest time sink. The same capability carries over to life underwriting, where a 1,500-page attending physician statement that once took an underwriter most of a day to review can be summarized into actionable notes in minutes.

Risk scoring on structured data. Gradient boosting machines remain the model family of choice for underwriting scoring and fraud detection because they typically outperform generalized linear models on predictive accuracy. These models produce a probability score that the underwriter - or the rules engine - can act on.

Pattern detection across portfolio. AI in underwriting is exceptional at finding correlations across thousands of bound policies that no human could surface - emerging concentration exposures, class-code anomalies, telematics outliers, geospatial accumulation.

Fraud signal generation. Pre-bind fraud flags from unstructured text, prior carrier patterns, and external data are now production-grade and feed directly into referral logic.

The three things AI does not do

AI does not exercise judgment. It cannot decide that a particular Tampa restaurant with a prior cancellation deserves a second look because the new ownership has a credible turnaround story. That is what underwriters do.

AI does not produce explainable decisions on its own. It produces a score or a draft. Without an explainability layer (SHAP, LIME) and a rules engine that translates the score into a policy outcome, the AI output is unauditable - and therefore not deployable in an NAIC-regulated environment.

AI does not replace the relationship layer. Brokers do business with people. The underwriter who knows the specialty broker by name, who knows their book, who can call them back about a difficult risk - that relationship is the moat AI cannot cross. The carriers that try to automate broker relationships discover the broker has placed the renewal elsewhere.

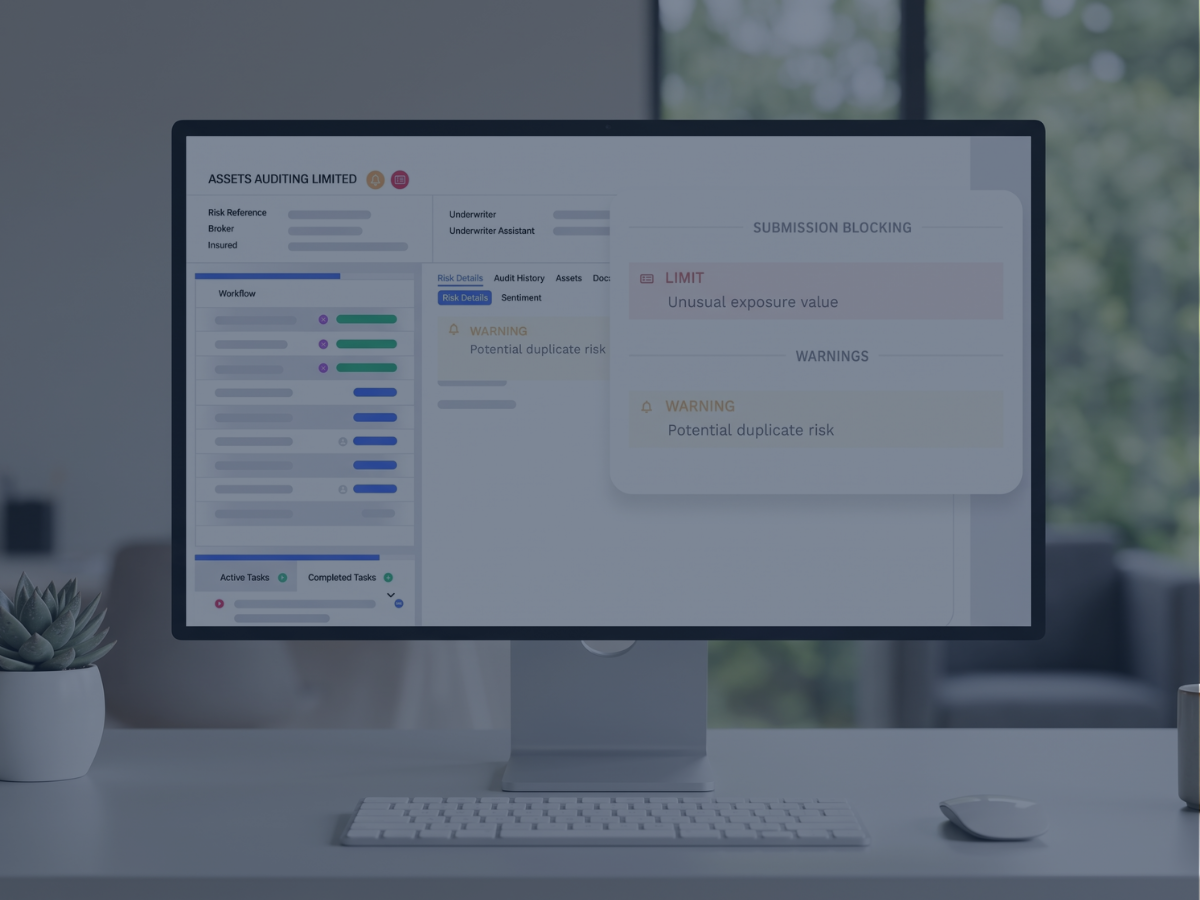

Why the rules-plus-ML hybrid is the 2026 architecture

The right architecture for AI in underwriting in 2026 is neither pure ML nor pure rules. It is rules-plus-ML hybrid, with the underwriting rules engine as the orchestration layer. The ML model produces a score; the rules layer decides whether the score is high enough to bind, low enough to decline, or borderline enough to refer. The rules layer also handles guardrails: confidence-interval thresholds below which a score is not used, override patterns when an underwriter disagrees, and the audit trail recording why a score produced a decision.
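A minimal sketch of that rules layer in Python. It assumes the model emits a risk score in [0, 1] where higher means riskier, together with a confidence interval; the thresholds and the `RoutingPolicy` and `route` names are hypothetical placeholders, not a recommended calibration.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class RoutingPolicy:
    # Hypothetical thresholds; real values come from appetite, filings, and validation.
    bind_at_or_below: float = 0.20     # low-risk scores eligible for straight-through bind
    decline_at_or_above: float = 0.80  # high-risk scores declined with a logged reason
    max_interval_width: float = 0.15   # guardrail: wider intervals mean the score is not used


def route(score: float, ci_low: float, ci_high: float,
          policy: RoutingPolicy = RoutingPolicy()) -> Tuple[str, str]:
    """Turn an ML risk score into a bind/refer/decline outcome plus an audit reason."""
    width = ci_high - ci_low
    if width > policy.max_interval_width:
        # Guardrail: the model is too uncertain, so a human decides.
        return "refer", f"confidence interval width {width:.2f} exceeds guardrail"
    if score <= policy.bind_at_or_below:
        return "bind", f"score {score:.2f} at or below bind threshold"
    if score >= policy.decline_at_or_above:
        return "decline", f"score {score:.2f} at or above decline threshold"
    return "refer", f"score {score:.2f} in referral band"
```

Keeping the thresholds in a policy object outside the model is what lets underwriting change appetite without retraining, and each returned reason string can be written straight into the audit trail.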

Five high-impact AI augmentation use cases for P&C

I’d require any AI-in-underwriting program I advise on to start with one of the five use cases below - not all of them. Trying to deploy all five at once is the surest way to stall a program in pilot purgatory.

Use case 1: Submission intake and pre-fill

The single highest-ROI starting point. AI extracts ACORD form fields, prior policy data, loss run line items, schedules of values, and broker-email context, and pre-fills the workbench submission record. Underwriters arrive at a submission already populated - their job becomes verification and judgment, not data entry.

This use case alone accounts for most of the 30 to 40 percent administrative-task figure in commercial P&C. The carriers I’ve worked with in commercial property and specialty lines see submission processing time drop from hours to minutes per account in the first 90 days.
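One way to make "verification, not data entry" concrete is to route each extracted field by confidence. A minimal sketch, assuming the extraction step returns a per-field confidence and a source reference (not every extractor does); the `ExtractedField` and `prefill` names and the 0.90 threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class ExtractedField:
    name: str          # workbench field, e.g. "insured_name"
    value: str         # value as extracted from the document
    confidence: float  # extractor-reported confidence in [0, 1] (assumed available)
    source: str        # where it came from, e.g. "ACORD 125" or "SOV row 4"


def prefill(fields: Iterable[ExtractedField],
            verify_below: float = 0.90) -> Tuple[Dict[str, str], List[str]]:
    """Populate the submission record and list the fields an underwriter should verify."""
    record: Dict[str, str] = {}
    to_verify: List[str] = []
    for f in fields:
        record[f.name] = f.value  # every field is pre-filled either way
        if f.confidence < verify_below:
            to_verify.append(f.name)  # low confidence: flag for human verification
    return record, to_verify
```

The underwriter then sees a fully populated record with a short list of fields to check, rather than a blank form.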

Use case 2: Risk scoring on structured data

A gradient-boosting or random-forest model trained on bound-policy outcomes (loss ratio, frequency, severity by class) produces a per-submission risk score. The score is not the decision - the underwriter or the rules engine is. It is a triage signal.

I recommend every CUO require their data science team to demonstrate model performance against a holdout set from at least 18 months of bound policies, broken down by line, class, and geography. A model that performs in aggregate but fails on a specific specialty segment is not deployable on that segment.
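The per-segment check can be sketched in a few lines of Python. This assumes a simplified binary target (did the policy produce a loss) rather than loss ratio, uses the rank-based (Mann-Whitney) form of AUC, and treats 0.65 as a hypothetical deployability floor; a production validation would use the carrier's own metrics and thresholds.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional, Tuple


def auc(scores: List[float], labels: List[int]) -> Optional[float]:
    """Rank-based AUC (Mann-Whitney): chance a loss-producing policy outscores a clean one."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        return None  # undefined when a segment has only one outcome class
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0  # ties count as half
    return wins / (len(pos) * len(neg))


def auc_by_segment(rows: Iterable[Tuple[str, float, bool]],
                   min_auc: float = 0.65) -> Dict[str, Tuple[Optional[float], bool]]:
    """rows are (segment, score, had_loss); returns {segment: (auc, deployable)}."""
    scores: Dict[str, List[float]] = defaultdict(list)
    labels: Dict[str, List[int]] = defaultdict(list)
    for segment, score, had_loss in rows:
        scores[segment].append(score)
        labels[segment].append(1 if had_loss else 0)
    result = {}
    for segment in scores:
        value = auc(scores[segment], labels[segment])
        result[segment] = (value, value is not None and value >= min_auc)
    return result
```

A segment key can combine line, class, and geography (for example `"property|restaurant|FL"`). The pairwise loop is quadratic, which is fine for illustration; a sort-based AUC scales better on full holdout sets.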

Use case 3: Fraud signal generation and pre-bind screening

Pattern detection across submission text, prior carrier data, and external data sources flags submissions with elevated misrepresentation or moral-hazard indicators. The flag is a referral trigger, not a decision. An underwriter reviews and either binds with conditions or declines with documented reasons.

The same infrastructure pulls double duty if the carrier integrates a claims AI system - pre-bind fraud signals correlate strongly with downstream claims behavior.

Use case 4: Pricing draft and rate-table interpolation

For commercial lines where the actuarial pricing model has gaps (new classes, novel exposures, sparse data), AI in underwriting can produce a draft price the underwriter accepts, modifies, or rejects. This is where the rules-plus-ML hybrid matters most. The AI draft is one input into a pricing rules engine; the engine combines the draft with actuarial rate tables, schedule modifications, and underwriter authority overrides to produce the bound price.
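A minimal sketch of one way such an engine could combine the inputs, assuming the AI draft is used only when no actuarial rate exists for the class, schedule modifications are capped at ±25 percent, and an underwriter override is accepted only within ±10 percent of the engine's rate. All of these limits and the `bound_price` name are hypothetical; actual caps come from the carrier's filed rating plan.

```python
from typing import List, Optional, Tuple


def bound_price(exposure_units: float,
                actuarial_rate: Optional[float],
                ai_draft_rate: float,
                schedule_mod: float,
                max_schedule_mod: float = 0.25,
                underwriter_rate: Optional[float] = None,
                authority_band: float = 0.10) -> Tuple[float, List[str]]:
    """Return the bound premium and the reasons the engine logged along the way."""
    reasons: List[str] = []
    if actuarial_rate is not None:
        base = actuarial_rate
        reasons.append("base rate from actuarial rate table")
    else:
        base = ai_draft_rate  # gap in the rate table: fall back to the AI draft
        reasons.append("no table rate for class; base rate from AI draft")

    # Clamp the schedule modification to the allowed range.
    mod = max(-max_schedule_mod, min(max_schedule_mod, schedule_mod))
    if mod != schedule_mod:
        reasons.append(f"schedule mod {schedule_mod:+.0%} capped at {mod:+.0%}")
    rate = base * (1 + mod)

    # Underwriter override only within authority; otherwise keep the engine rate and refer.
    if underwriter_rate is not None:
        if rate * (1 - authority_band) <= underwriter_rate <= rate * (1 + authority_band):
            rate = underwriter_rate
            reasons.append("underwriter override within authority")
        else:
            reasons.append("override outside authority; refer to senior underwriter")
    return round(rate * exposure_units, 2), reasons
```

The reasons list is the point of the design: every step that moved the price is recorded in the order it happened.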

Use case 5: Portfolio monitoring and concentration alerting

A portfolio-management agent monitors concentration risk across geography, industry, and peril type, alerting underwriters and the CUO before accumulations become problematic. This is where predictive analytics in insurance underwriting earns its name - the model is forecasting future loss exposure, not just describing the current book. The use case delivers the highest combined-ratio impact in catastrophe-exposed lines (commercial property, marine, energy).

The carriers I’ve advised on portfolio AI build the monitoring layer once and plug it into both the underwriting workbench and the reinsurance team. The same data foundation supports both - and it is the foundation of every automated underwriting software architecture I’d recommend to a CUO planning past 2027.
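The alerting half of this use case can be sketched as an accumulation check. The example assumes each policy carries a zone, a peril, and total insured value (TIV), and that the carrier sets an accumulation limit per zone and peril. It covers only monitoring of the current book, not the forecasting model, and the names and the 80 percent warning level are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Key = Tuple[str, str]  # (zone, peril)


def concentration_alerts(policies: Iterable[dict],
                         limits: Dict[Key, float],
                         warn_at: float = 0.80) -> List[Tuple[str, str, float, float, str]]:
    """Aggregate TIV by zone and peril and flag accumulations near or over their limit."""
    totals: Dict[Key, float] = defaultdict(float)
    for p in policies:
        totals[(p["zone"], p["peril"])] += p["tiv"]

    alerts = []
    for (zone, peril), total in totals.items():
        limit = limits.get((zone, peril))
        if limit is None:
            continue  # no limit configured for this accumulation
        utilization = total / limit
        if utilization >= warn_at:
            level = "breach" if utilization >= 1.0 else "warning"
            alerts.append((zone, peril, total, utilization, level))
    # Worst accumulations first, for the underwriter and CUO dashboards.
    return sorted(alerts, key=lambda a: a[3], reverse=True)
```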

Human-in-the-loop architecture - the boundary between AI and underwriter judgment

The single design decision that separates a successful AI-in-underwriting deployment from a stalled one is where the boundary sits between AI decision and human judgment. In my experience, this is the human-in-the-loop architecture choice - and I’ve seen carriers get it wrong in both directions.

The over-automation failure mode

Carriers under pressure to show productivity gains push the boundary too far toward AI. The workbench auto-binds 65 percent of submissions, the underwriter handles the remaining 35 percent - and within 18 months, loss ratio drifts up because the auto-bind population includes risks that should have been referred. The reinsurance team notices first, and the conversation that follows is uncomfortable.

The under-automation failure mode

Carriers nervous about regulatory exposure push the boundary too far toward humans. AI produces a score, but every submission still gets the full underwriter review. The carrier has bought AI infrastructure and gotten back none of the productivity. The CFO notices, and the AI program stalls.

The pattern that actually works

The boundary I recommend follows three rules. First, AI handles the data work, humans handle the judgment work - pre-fill, scoring, flagging, draft pricing on the AI side; binding decisions, override approvals, and broker conversations on the human side. Second, referral rates land between 15% and 30% for a mature commercial lines book - much above and you have not solved the productivity problem; much below and you have over-automated. Third, every override is captured in the audit trail with a reason - for compliance and for retraining the model.
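The second and third rules translate directly into monitoring checks. A minimal sketch, assuming decisions are logged as "bind", "refer", or "decline" and overrides as (submission id, reason) pairs; the function names are hypothetical, and the 15 to 30 percent band is the one stated above.

```python
from typing import Iterable, List, Tuple


def referral_health(outcomes: List[str], low: float = 0.15,
                    high: float = 0.30) -> Tuple[float, str]:
    """Compare the referral rate against the target band for a mature commercial book."""
    if not outcomes:
        raise ValueError("no decisions to evaluate")
    rate = sum(1 for o in outcomes if o == "refer") / len(outcomes)
    if rate > high:
        status = "under-automated: productivity problem not solved"
    elif rate < low:
        status = "over-automated: risks that need review may be auto-bound"
    else:
        status = "within target band"
    return rate, status


def overrides_missing_reason(overrides: Iterable[Tuple[str, str]]) -> List[str]:
    """Return submission ids whose override was logged without a reason."""
    return [sid for sid, reason in overrides if not (reason or "").strip()]
```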

The carrier I worked with that got it right

I worked with a Northeast specialty carrier that deployed AI submission intake on commercial property. Pre-deployment baseline: 11 days from broker submission to bound policy. Post-deployment, 12 months in: 36 hours for the 70% of submissions in their core appetite; the remaining 30% took 4 to 6 days as senior underwriters worked them with full pre-fill and scoring support. Combined ratio on migrated classes improved 3.8 points. The CUO did not reduce headcount - he redeployed two senior underwriters to a new specialty class he had wanted to enter for two years.

That is the right shape of the answer. AI in underwriting did not replace anyone. It expanded the team’s effective capacity by making each underwriter more productive.

Explainability and audit trail - because the NAIC AI Bulletin is now real

The 2024 conversation about AI in underwriting was about whether it worked. The 2026 conversation is about whether you can explain how it worked. The shift matters because the regulator now asks.

What the NAIC AI Bulletin requires

The 2023 NAIC Model Bulletin requires a written AI Systems Program covering governance, transparency, and accountability. It notes that insurers may be asked, including via document production, about development and use of AI, with focus on governance, risk management, and internal controls. The AI Systems Evaluation Tool pilot launched in January 2026 operationalizes that examination capability.

For an AI-in-underwriting program, the bulletin’s practical implications are documentation of the model, the training data, the validation regime, and per-submission decision evidence. Every score, every rules-engine decision, every underwriter override - all logged, all retrievable, all defensible to a state DOI examiner.

The state-by-state layer

Three states moved beyond the NAIC framework. New York’s DFS Circular Letter 2024-7 requires insurers to demonstrate that AI and external data systems do not proxy for protected classes. Colorado Revised Statutes §10-3-1104.9 imposes quantitative disparate-impact testing, with private passenger auto and health benefit plans added effective October 15, 2025. California restricts purely automated decisions in health-care contexts. Carriers writing in any of those states, in addition to the NAIC-adopting states, should plan governance against the highest applicable standard.

The most recent industry-wide signal came in March 2026: Crowell & Moring noted the NAIC’s AI Issue Brief, the multistate Evaluation Tool pilot, and continued Bulletin adoption signal that AI governance is a present regulatory obligation.

Explainable AI in practice

Methods like SHAP (Shapley Additive Explanations) and LIME (Local Interpretable Model-agnostic Explanations) translate model outputs into human-readable reason codes. The reason codes are what an examiner sees when they ask “why was this risk priced 18 percent above book average?” The answer should be a concrete attribution: prior loss frequency in the class, occupancy code, distance to coast, prior carrier non-renewal - each with its weight.

Without an explainability layer, an AI model is a black box. A black box does not pass the regulatory compliance test for an NAIC-aligned market conduct exam. With an explainability layer plus a rules-engine audit trail, the same model becomes a defensible production tool.
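To make the attribution idea concrete, the sketch below computes exact Shapley values by enumerating feature coalitions. This is the quantity that SHAP libraries estimate, not a SHAP library API. It assumes features outside a coalition are set to a reference profile (one of several conventions), and the toy scoring model and feature names are hypothetical. Exact enumeration grows as 2^n, so it is only practical for a handful of features.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict, List, Tuple

Features = Dict[str, float]


def shapley_values(model: Callable[[Features], float],
                   x: Features, baseline: Features) -> Features:
    """Exact Shapley attribution of model(x) - model(baseline) to each feature."""
    names = list(x)
    n = len(names)

    def value(coalition: set) -> float:
        # Features in the coalition take the submission's value; others take the baseline.
        return model({f: (x[f] if f in coalition else baseline[f]) for f in names})

    phi: Features = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for size in range(len(others) + 1):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


def reason_codes(phi: Features, top_k: int = 3) -> List[Tuple[str, float]]:
    """Largest absolute contributions first, as an examiner-facing reason list."""
    return sorted(phi.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top_k]


def toy_risk_score(p: Features) -> float:
    # Hypothetical model with an interaction between prior losses and coastal exposure.
    return (0.10 + 0.30 * p["prior_loss_freq"] + 0.20 * p["coastal"]
            + 0.05 * p["restaurant_occupancy"] + 0.10 * p["prior_loss_freq"] * p["coastal"])
```

For a submission with all three features set to 1 against an all-zero reference profile, the attributions come out at about 0.35 (prior loss frequency), 0.25 (coastal), and 0.05 (occupancy). They sum to the 0.65 gap between the two scores, with the interaction term split evenly between the two features that create it.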

What the audit trail must contain

For each scored, declined, referred, or bound submission, the audit record should capture the model version, input features, the score produced, SHAP-style attribution per feature, the rules-engine outcome, the underwriter who approved any override with reason, and timestamps. From a per-policy view, an examiner should reach the full chain in two clicks or fewer.
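A minimal sketch of such a record as a Python dataclass, assuming JSON is the storage format and that bind, refer, and decline are the only rules outcomes. The field names are hypothetical, and a real schema would follow the carrier's own data standards. The validation enforces two of the requirements above: attributions must refer to logged input features, and an override must carry a reason.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Dict, Optional

OUTCOMES = {"bind", "refer", "decline"}


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AuditRecord:
    submission_id: str
    model_version: str                # exact model build that produced the score
    input_features: Dict[str, float]  # features as the model saw them
    score: float
    attributions: Dict[str, float]    # SHAP-style contribution per feature
    rules_outcome: str                # decision from the rules engine
    override_by: Optional[str] = None
    override_reason: Optional[str] = None
    scored_at: str = field(default_factory=_utc_now)

    def __post_init__(self) -> None:
        if self.rules_outcome not in OUTCOMES:
            raise ValueError(f"unknown outcome: {self.rules_outcome}")
        unknown = set(self.attributions) - set(self.input_features)
        if unknown:
            raise ValueError(f"attributions for unlogged features: {sorted(unknown)}")
        if self.override_by and not (self.override_reason or "").strip():
            raise ValueError("an underwriter override must carry a reason")

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)
```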

Building a phased AI augmentation roadmap (3 to 18 months)

The carriers that succeed at AI in underwriting do not deploy in one big bang. They phase. In my experience, the roadmap that works has three phases, each with a clear success metric.

Phase 1 (months 1–3): Submission intake pre-fill on one line of business

Pick the line with the highest broker-email submission volume and the most structured ACORD intake. Personal property, commercial property, and BOP are common starting points. Goal: on a held-out submission set, AI extracts at least 85% of fields, with at least 95% accuracy on the extracted values. Success metric: median underwriter time per submission drops by at least 30%.
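The Phase 1 goals can be scored mechanically on the held-out set. A minimal sketch, assuming each held-out submission has hand-keyed ground truth per field, that exact equality counts as correct (real pipelines normalize dates, amounts, and names first), and that per-submission timing is available before and after; the function names are hypothetical.

```python
from statistics import median
from typing import Dict, List, Optional, Sequence, Tuple


def extraction_metrics(expected: List[Dict[str, str]],
                       extracted: List[Dict[str, Optional[str]]]) -> Tuple[float, float]:
    """Coverage: share of expected fields the AI returned a value for.
    Accuracy: share of returned values that match the hand-keyed ground truth."""
    total = returned = correct = 0
    for truth, got in zip(expected, extracted):
        for name, value in truth.items():
            total += 1
            if got.get(name) is not None:
                returned += 1
                correct += int(got[name] == value)
    coverage = returned / total if total else 0.0
    accuracy = correct / returned if returned else 0.0
    return coverage, accuracy


def phase1_passed(coverage: float, accuracy: float,
                  minutes_before: Sequence[float], minutes_after: Sequence[float]) -> bool:
    """Apply the Phase 1 goals: >=85% coverage, >=95% accuracy, >=30% median time drop."""
    time_drop = 1 - median(minutes_after) / median(minutes_before)
    return coverage >= 0.85 and accuracy >= 0.95 and time_drop >= 0.30
```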

Phase 2 (months 3–9): Risk scoring on the same line

Build a gradient-boosting risk model trained on 24 to 36 months of bound-policy outcomes for that line. Validate on a holdout. Deploy in shadow mode (model produces score, underwriter does not see it) for 60 days. Compare model scores against underwriter decisions. Goal: the model produces actionable triage (high/medium/low) that aligns with eventual loss ratio rank order. Success metric: submissions in the lowest-risk score quintile show a meaningfully better loss ratio than those in the highest-risk quintile, with statistically significant separation.
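The rank-order check behind that success metric can be sketched as a quintile loss-ratio table. This assumes higher scores mean higher risk and that each holdout policy has earned premium and incurred loss. It does not include the significance test, which in practice might be a bootstrap over policies. The function name is hypothetical.

```python
from typing import List, Sequence, Tuple


def quintile_loss_ratios(policies: Sequence[Tuple[float, float, float]]) -> List[float]:
    """policies are (risk_score, earned_premium, incurred_loss); higher score = riskier.
    Returns loss ratios from the lowest-risk quintile (index 0) to the highest (index 4)."""
    ranked = sorted(policies, key=lambda p: p[0])
    n = len(ranked)
    if n < 5:
        raise ValueError("need at least five policies to form quintiles")
    ratios = []
    for q in range(5):
        bucket = ranked[q * n // 5:(q + 1) * n // 5]
        premium = sum(p[1] for p in bucket)
        loss = sum(p[2] for p in bucket)
        if premium <= 0:
            raise ValueError(f"quintile {q} has no earned premium")
        ratios.append(loss / premium)
    return ratios
```

A model that rank-orders well produces loss ratios that rise from index 0 to index 4; `ratios[-1] - ratios[0]` is the separation to test for significance.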

Phase 3 (months 9–18): Rules-engine integration and STP

Integrate the AI score into the underwriting rules engine. Start straight-through processing with the lowest-risk decile, with full audit trail and underwriter override capability, and widen the STP band as override and loss data accumulate. Refer the borderline 30%. Decline with reason logged. Success metric: STP rate of 30 to 50% on the line, broker turnaround time below 24 hours, combined ratio improvement of at least 1.5 points within 12 months of full deployment.

What this roadmap does not include

It does not include rolling out across all lines simultaneously. It does not include a “platform decision” before phase 1 has produced data. It does not include vendor lock-in to a single AI provider - the rules engine should be vendor-neutral so that the AI components can be swapped as the market matures. It does not include claims AI, customer service AI, or marketing AI - those are different programs with different governance.

In my experience, the carriers that try to do all of this in 12 months end up doing none of it. The carriers that do it in three sequenced phases over 18 months end up with a production-grade AI-in-underwriting program their CUO can defend to the board, the reinsurer, and the state DOI.

Vendor evaluation - what to require from AI underwriting solutions

I’ve sat in maybe 25 vendor evaluations for AI underwriting solutions, and the failure modes are remarkably consistent. The questions below are ones I’d require any AI vendor to answer in writing - and a serious vendor will welcome them.

Required capabilities

The vendor should demonstrate, on submissions from a real line you write, that their model achieves measurable lift over your current process; that it produces SHAP-style reason codes per decision; that the audit trail captures inputs, model version, score, and outcome per submission with NAIC-aligned formatting; that the engine integrates with at least three external data sources your portfolio depends on (for example ISO, CLUE, D&B, MVR, or telematics) and accepts ACORD-standard submissions; and that latency is sub-second for score generation.

The Datos Insights Top Trends in P&C 2026 report identifies composable rating environments and modular underwriting workbenches as a 2026 competitive differentiator. The architecture you want is one where the AI component is replaceable as the market evolves.

Specialty lines and explainability

A model trained primarily on personal lines data will not perform on E&S property, professional liability, or marine. I’d ask any vendor: how many of your current customers write the line I write? What is the holdout-set performance on that line? What does your reference customer’s audit trail look like under a market conduct exam? Silence or generalities are a signal.

Model governance

The vendor must document model lineage - which dataset trained the model, when, with what target, with what validation. They must support periodic retraining with versioning, provide drift-monitoring alerts, and commit in writing to who owns model decisions when they go wrong.

Total cost over five years

Pricing comes in three flavors: per-seat, per-decision, and platform-fee with implementation. I’d model TCO over five years against projected submission volume and growth. The cheapest year-one license is rarely the cheapest five-year TCO when the model is retrained twice and your portfolio mix shifts.
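A multi-year comparison of the three pricing shapes can be sketched as follows. Every number in the usage example is hypothetical, chosen only to show the mechanics; substitute vendor quotes, your projected submission volumes, and the expected number of retraining cycles. The function name and pricing keys are likewise illustrative.

```python
from typing import Any, Dict, List


def total_cost_of_ownership(pricing: Dict[str, Any],
                            decisions_per_year: List[int],
                            seats_per_year: List[int]) -> float:
    """Sum license, implementation, and retraining cost over the projection horizon."""
    if len(decisions_per_year) != len(seats_per_year):
        raise ValueError("volume and seat projections must cover the same years")
    kind = pricing["kind"]
    total = float(pricing.get("implementation", 0.0))
    for decisions, seats in zip(decisions_per_year, seats_per_year):
        if kind == "per_seat":
            total += seats * pricing["per_seat"]
        elif kind == "per_decision":
            total += decisions * pricing["per_decision"]
        elif kind == "platform":
            total += pricing["annual_fee"]
        else:
            raise ValueError(f"unknown pricing kind: {kind}")
    # Retraining cycles are often billed separately from the license.
    total += pricing.get("retraining_fee", 0.0) * pricing.get("retrainings", 0)
    return total


# Hypothetical five-year projection: 20,000 decisions growing 15% a year, 12 seats.
decisions = [round(20_000 * 1.15 ** year) for year in range(5)]
seats = [12] * 5
quotes = {
    "per_seat": {"kind": "per_seat", "per_seat": 9_000.0, "implementation": 50_000.0},
    "per_decision": {"kind": "per_decision", "per_decision": 4.0, "implementation": 80_000.0},
    "platform": {"kind": "platform", "annual_fee": 90_000.0, "implementation": 150_000.0,
                 "retraining_fee": 25_000.0, "retrainings": 2},
}
for name, quote in quotes.items():
    print(f"{name}: {total_cost_of_ownership(quote, decisions, seats):,.0f}")
```

Per-decision pricing grows with submission volume while per-seat and platform fees do not, which is why the ranking can flip between year one and year five.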

Reference customer interviews

Ask for three reference customers - and insist on at least one who left the vendor. Current customers tell you what works. A former customer tells you where it breaks. If the vendor refuses, that is information.

Vendor evaluation matrix for AI underwriting

FAQ - AI in underwriting

Will AI replace underwriters’ jobs in the next five years?

No. The consensus across primary research (WTW 2026, McKinsey, BCG) and across every CUO engagement I’ve worked on is that AI augments underwriters - it does not replace them. Personal lines and small-business underwriting will continue to automate further, with humans overseeing portfolios rather than rekeying documents. Complex commercial and specialty risks will continue to require human judgment, with AI providing pre-fill, scoring, and decision support.

What does AI do in underwriting right now in 2026?

AI in underwriting handles four high-impact tasks: document parsing and submission pre-fill, structured-data risk scoring (typically gradient-boosting models), pattern detection for fraud and concentration risk, and draft pricing for novel exposures. It does not exercise judgment, produce explainable decisions without a separate explainability layer, or replace the broker relationship.

How much underwriter time can AI augmentation actually save?

McKinsey’s foundational research finds 30 to 40 percent of underwriter time is spent on administrative tasks. Capgemini’s 2024 study put it at 41 percent. AI submission intake typically captures the majority of that time - meaning a well-deployed program effectively expands underwriting capacity by one underwriter for every five to seven on the team, without hiring.

Does AI improve combined ratio for P&C insurers?

The WTW 2026 survey of 59 P&C insurers found insurers using sophisticated analytics achieved combined ratios six percentage points lower and premium growth three percentage points higher than slower adopters between 2022 and 2024. The mechanism is better risk selection at scale, faster broker turnaround that wins better submissions, and reduced premium leakage from misclassification.

How does AI in underwriting comply with NAIC AI Bulletin?

The NAIC Model Bulletin requires a written AI Systems Program covering governance, transparency, and accountability - including documentation of decision logic, model validation, and decision-level evidence on regulator request. An AI underwriting program complies by combining model lineage documentation, SHAP-style explainability per decision, and a per-submission audit trail captured in the rules engine that orchestrates the AI score into the bind/decline/refer outcome.

What is the difference between rules-based and AI underwriting?

Rules-based underwriting executes deterministic logic that you wrote - eligibility tests, pricing factors, declination conditions - with explainable, auditable outcomes. AI underwriting produces statistical scores or pattern detections from training data. The 2026 P&C architecture is rules-plus-AI hybrid: AI produces a score or pre-fill, the rules layer decides what the score means and handles the guardrails.

Can AI explain its underwriting decisions to regulators?

Not by itself. AI models produce scores, not explanations. Methods like SHAP and LIME translate the model output into human-readable reason codes - “this submission scored 0.72 because of prior loss frequency, occupancy code, and distance to coast, with these weights.” The reason codes are what a state DOI examiner sees during a market conduct exam, and they are the operational compliance standard for NAIC AI Bulletin states.

Talk to Decerto and Higson

The retirement cliff is not 2028’s problem - it is 2026’s problem, because the carriers building AI augmentation now will spend 2027 in production while their slower competitors are still in vendor selection. The CUOs I work with who waited until 2026 to deploy AI are now running 18-month catch-up programs against carriers who started in 2024.

A 30-minute portfolio assessment with me is how the work I do with carrier boards usually starts. Vendor-neutral. Your portfolio data, your gaps, your timeline. If Higson plus a partner AI vendor is not the right answer for your carrier, I will tell you that. The first call is a technical Q&A with me and a senior architect, NDA-protected, no sales pitch and no demo loop.

What you get out of the assessment: a documented inventory of where your underwriting decisions currently live (PAS, Excel, PDFs, underwriter judgment), a gap analysis against NAIC AI Bulletin requirements specific to your states of admission, a phased roadmap matching the three-phase pattern described above, and a TCO and combined-ratio impact estimate grounded in the carriers I’ve advised in adjacent classes. Plus a free Higson sandbox so your team can configure rules that consume an AI score and produce a defensible bind/decline/refer decision - all with the audit trail your compliance team can take to a state DOI.

Two reference customers I would point you to before any conversation. A Northeast specialty carrier that deployed AI submission intake on commercial property and saw bound-policy turnaround drop from 11 days to 36 hours on 70% of submissions, with combined ratio improvement of 3.8 points across migrated classes - named under NDA on request. A regional commercial carrier whose senior underwriter capacity expanded by the equivalent of 2.4 FTEs through pre-fill alone, redeployed to a new specialty class.

You can also download the NAIC AI Model Bulletin Compliance Checklist for Underwriting - the same checklist I use in board-level assessments - before any conversation with me.

Calendar a 30-minute portfolio assessment with Mariusz Zagajewski - direct calendar slot, no form, no demo loop.

Explore Higson, Decerto’s Underwriting Workbench, and AI for Insurance product pages.

Sources:

- WTW - Insurers Using Advanced Analytics and AI Report Strong Returns on Investment and Premium Growth (2026 Advanced Analytics & AI Survey, March 2026).

- McKinsey & Company - From art to science: The future of underwriting in commercial P&C insurance.

- Insurance Thought Leadership - The New Rules of Underwriting (citing NAMIC retirement projection of 50% of underwriters by 2028).

- Quarles & Brady LLP - Nearly Half of States Have Now Adopted NAIC Model Bulletin on Insurers’ Use of AI (March 2025).

- Holland & Knight - The Implications and Scope of the NAIC Model Bulletin on the Use of AI by Insurers (May 2025).

- Buchanan Ingersoll & Rooney - When Algorithms Underwrite: Insurance Regulators Demanding Explainable AI Systems (October 2025).

- Crowell & Moring - NAIC Intensifies AI Regulatory Focus: What Insurers Need to Know (March 2026).

- Datos Insights - Top Trends in Property and Casualty 2026: Building the Intelligence-Ready P/C Carrier.
